Google’s AI Mode is one of the search giant’s most transformational UX moves in its 25-year existence. It turns searches into natural-language conversations that build on themselves through successive follow-up questions.

This flips the script for the search user experience, as well as Google’s monetization model (more on that in a bit). For example, you can ask Google about the best screws to use in a concrete wall, then follow up to ask what type and size of drill bit you need for pilot holes.

Since Google launched AI Mode, then released it widely last month, it has refined the user experience and other dynamics. And because it’s AI-based, it will gain functionality and intelligence over time. This is one of the marks of software products in the AI age.

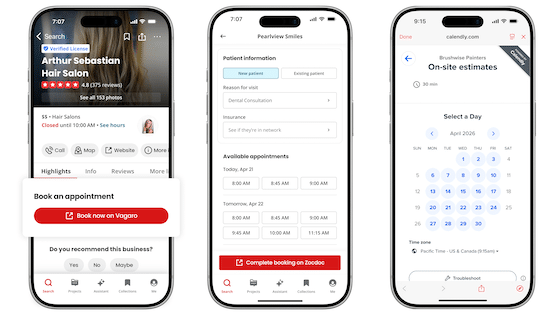

The latest update to AI Mode doubles down on its central conversational component. Specifically, it’s voice-enabled. With the new Search Live integration, users can launch open-ended voice dialogues with AI Mode on their mobile devices. This is accessible in the Google app by tapping the “Live” icon.

Inputs for Inferred Intent

The addition of voice conversations opens things up a bit for Google. For example, it creates more use cases and environments where AI Mode is applicable. These will align with the same scenarios where users currently utilize standard voice search – everything from driving to shopping while on the go.

In fact, this could be where AI Mode really finds its groove, as opposed to using it on your desktop. The conversational interface at the heart of AI Mode is perhaps more natural when spoken versus typed. And just like we discovered early in the smartphone era, the queries themselves will vary in nature.

That last part is all about user intent. When you ask questions on the go, your needs may be different from when you’re at your desk. The classic local search example is that someone searching for McDonald’s on a mobile device is likely looking for the closest location. The same search on a desktop may signal something different.

This longstanding local search paradigm will carry into Google’s AI era. But with AI Mode, it has more inputs to infer your intent. “More inputs” is meant literally, as natural-language search queries have more words than the keyword-based “caveman” style of search we’ve been trained on (e.g., “food near me”).

Quality over Quantity

Because inferred intent is Google’s jam, AI Mode’s query richness is likely something Google designed into the product. Indeed, it could offset some of the very real downsides of AI Mode for Google. That gets us to the elephant in the room noted earlier: AI Mode upends Google’s SERP-based ad model.

In other words, ad inventory will decline in a world that’s no longer built around 10 blue links and a few sponsored ad slots. AI search instead aims to give you the one right answer to your question. But AI Mode counters that with more search inputs (word count) and more searches (follow-up queries).

That gets to the quantity/quality tradeoff we’ve examined in the past. Google will surely lose quantity in terms of ad inventory, as noted. But can it counterbalance that with greater quality? In other words, with greater dimension and context in users’ natural-language queries, can it better pinpoint your intent?

And with that more granular intent, can it serve sponsored content that’s higher quality and more likely to resonate? That higher quality translates to premium pricing for advertisers. So the ultimate question is whether fewer premium-priced ads yield more aggregate revenue than a greater quantity of cheaper ads.
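
To make that tradeoff concrete, here is a back-of-the-envelope sketch in Python. Every figure in it is hypothetical, chosen only to illustrate the arithmetic and not drawn from Google’s actual ad data. The function names are our own, and the model (revenue as ad volume times average price) is a deliberate simplification.

```python
def aggregate_revenue(ad_slots: float, price_per_ad: float) -> float:
    """Total revenue as a simple product of ad volume and average price."""
    return ad_slots * price_per_ad


def break_even_price_multiple(inventory_decline: float) -> float:
    """Price multiple needed to keep revenue flat after inventory shrinks.

    inventory_decline is a fraction in [0, 1), e.g. 0.4 means 40% fewer ads.
    """
    if not 0 <= inventory_decline < 1:
        raise ValueError("inventory_decline must be in [0, 1)")
    return 1 / (1 - inventory_decline)


# Hypothetical illustration only: these numbers are assumptions, not Google data.
baseline = aggregate_revenue(ad_slots=1_000, price_per_ad=2.00)
fewer_pricier = aggregate_revenue(ad_slots=600, price_per_ad=3.50)

print(f"Baseline revenue:       {baseline:.2f}")
print(f"Fewer, pricier ads:     {fewer_pricier:.2f}")
print(f"Break-even price bump:  {break_even_price_multiple(0.40):.2f}x")
```

In this made-up scenario, a 40% drop in inventory needs roughly a 1.67x increase in average ad price just to break even, so a jump from 2.00 to 3.50 (1.75x) comes out slightly ahead. Whether real-world pricing can clear that kind of bar is exactly the open question.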

Offers vs. Ads

“Ads” is probably the wrong word. In this transformation of Google’s revenue model, the right framing may be “offers,” as argued by Yext Chief Data Officer Christian Ward. These could be offers that are generated in real time and priced in a real-time bidding style to better connect buyer and seller.

Most of the above was already true of AI Mode, or at least of our speculation about where it could go. But the latest (albeit predictable) addition of voice conversations will thicken the plot. Remaining questions include how offers will be served, visually or audibly, in a voice context.

The answer may be some combination of the two. Audible ads or offers in the middle of your on-the-go AI Mode dialogues may degrade the UX. But Google could save that knowledge and serve ads/offers on your phone’s visual interface, or at a later time that’s more fitting (e.g., next time you’re at your desk).

That last option could be advantageous. In fact, one of AI Mode’s benefits is the ability to return to your conversational history and reference past dialogues. That could be one place where offers make sense. And Google will use all those cumulative interactions for user profiles and ongoing offer targeting.

Of course, this is all very early stage and wide open. It could go in several directions, so we’ll be watching (and listening) closely.